Alternate destinations are deprecated in 12.2 for remote locations.

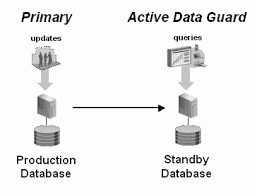

This diagram shows the use of an alternate destination for sending logs.

An alternate destination can be local to the database (to be used if the disk location fills up), but it can also be used to change the remote destination that the logs are sent to.

Configuring an "ALTERNATE" for a local destination is still supported.

Below is what this looks like in the 18c manual.

LOG_ARCHIVE_DEST_1='LOCATION=/disk1 MANDATORY MAX_FAILURE=1 ALTERNATE=LOG_ARCHIVE_DEST_2'

LOG_ARCHIVE_DEST_STATE_1=ENABLE

LOG_ARCHIVE_DEST_STATE_2=ALTERNATE

LOG_ARCHIVE_DEST_2='LOCATION=/disk2 MANDATORY'

In this example, if /disk1 is not available (it fills up, etc.), the database will switch to sending logs to /disk2 so that the database does not hang.

However, for remote destinations (that is, destinations using "SERVICE"), this changes with 12.2.

12.2 introduces the idea of "GROUP" and "PRIORITY" in place of a simple "ALTERNATE".
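One way to see which of the two local destinations is currently in use is to query V$ARCHIVE_DEST. This is only a minimal sketch, assuming the destination numbers 1 and 2 from the example above; the exact status values you see may differ depending on your version and configuration.

```sql
-- List the state of the primary local destination (1) and its alternate (2).
-- Typically the destination in use shows a STATUS of VALID and the one
-- held in reserve shows ALTERNATE; ERROR is populated after a failure.
SELECT dest_id, status, destination, error
FROM   v$archive_dest
WHERE  dest_id IN (1, 2);
```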

I'm sure this was added for Far Sync but it also comes into play with the ZDLRA.

Using only "ALTERNATE" gives you the ability to switch to a single remote destination. Using GROUP and PRIORITY lets you set multiple destinations and decide the order in which they are used.

Now let's start with a simple example with Far Sync.

I have 2 locations. San Francisco (SF) is the primary with 2 local Far Sync servers, and my remote destination is New York (NYC).

I can group them together and set the priority that they use. I can even switch from sync to async. Below is what the new syntax would look like for the destinations.

LOG_ARCHIVE_DEST_2='SERVICE=FARSYNC1 SYNC GROUP=1 PRIORITY=1'

LOG_ARCHIVE_DEST_3='SERVICE=FARSYNC2 SYNC GROUP=1 PRIORITY=1'

LOG_ARCHIVE_DEST_4='SERVICE=NYC ASYNC GROUP=1 PRIORITY=2'

This behaves in the following manner.

- My database in San Francisco sends archive logs to EITHER of the two services specified as PRIORITY=1. The log could go to FARSYNC1 or FARSYNC2 and get passed on to NYC.

- If either of these 2 PRIORITY=1 destinations becomes unavailable the other will continue to work.

- If both of these 2 PRIORITY=1 destinations become unavailable, the redo will be sent to NYC as ASYNC.

- If NYC is the destination (because of a double failure), it will switch back to either of the 2 FARSYNC services when they become available.

You can add up to 8 layers of PRIORITY and include a remote FARSYNC server if you would like.
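As a rough sketch of what an extra layer might look like, the statements below add a third priority level to the SF example. FARSYNC_NYC is a hypothetical service name I made up for a Far Sync server in New York, and I've left out the other attributes a real configuration would normally need (DB_UNIQUE_NAME, VALID_FOR, and so on), so treat this as an illustration rather than a tested setup.

```sql
-- PRIORITY=1: the two local Far Sync servers in SF (SYNC).
ALTER SYSTEM SET LOG_ARCHIVE_DEST_2='SERVICE=FARSYNC1 SYNC GROUP=1 PRIORITY=1' SCOPE=BOTH;
ALTER SYSTEM SET LOG_ARCHIVE_DEST_3='SERVICE=FARSYNC2 SYNC GROUP=1 PRIORITY=1' SCOPE=BOTH;

-- PRIORITY=2: a remote Far Sync server (FARSYNC_NYC is an assumed name).
ALTER SYSTEM SET LOG_ARCHIVE_DEST_4='SERVICE=FARSYNC_NYC ASYNC GROUP=1 PRIORITY=2' SCOPE=BOTH;

-- PRIORITY=3: ship directly to the NYC standby as the last resort.
ALTER SYSTEM SET LOG_ARCHIVE_DEST_5='SERVICE=NYC ASYNC GROUP=1 PRIORITY=3' SCOPE=BOTH;
```

The idea is the same as before: the lowest PRIORITY number that still has an available destination is the one that gets used.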

Now, what does this have to do with ZDLRA?

You can also use an alternate destination for redo traffic on the ZDLRA. The alternate can be the downstream ZDLRA if replication is used, or it can be the upstream ZDLRA if store-and-forward is being used. These are outlined in the ZDLRA admin guide under "Implementing Additional High Availability Strategies".

In the case of the ZDLRA you would use 2 destinations.

The first destination is the primary ZDLRA, and the second destination is the alternate ZDLRA.

The configuration would look like this.

LOG_ARCHIVE_DEST_2='SERVICE="ZDLRA_SF_SCAN:1521/ZDLRASF:dedicated" ASYNC GROUP=1 PRIORITY=1'

LOG_ARCHIVE_DEST_3='SERVICE="ZDLRA_NYC_SCAN:1521/ZDLRANYC:dedicated" ASYNC GROUP=1 PRIORITY=2'

This is an interesting change to the ALTERNATE setting on remote destinations.