In this post I will walk through what happens to archive log backupsets sent to the ZDLRA through log sweeps.

When you implement the ZDLRA, you have two choices for backing up archive logs.

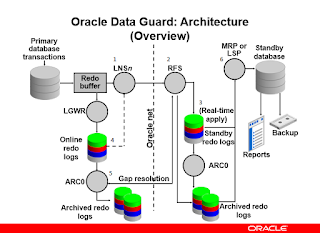

1) Use real-time redo transport (RRT) which is the same mechanism that is used to send archive logs to a standby database.

2) Use traditional log sweeps (RMAN) that pick up the archive logs and send them to the ZDLRA as backupsets.

Today I am going to go through the second option, using RMAN log sweeps.

Before I go into detail please refer to this MOS note to ensure you understand best practice for backing up a database to the ZDLRA.

RMAN best practice recommendations for backing up to the Recovery Appliance (Doc ID 2176686.1)

As of this writing, the best practice command is:

backup device type sbt cumulative incremental level 1 filesperset 1 section size 64g database plus archivelog filesperset 32 not backed up;

When you execute the best practice command, there are 2 pieces to this backup script.

Database Backup - The best practice is filesperset=1 and section size 64G. This ensures that a large datafile backup (bigfile) is broken up into pieces, and each backup piece contains only a single datafile. This allows the virtualization process to start as soon as each backup piece is received.

Archivelog Backup - Best practice is to use filesperset=32 and only backup archivelogs that have not been backed up.
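As a rough sketch of those two pieces, the single best practice command could be written as separate statements. This is only an approximation for illustration: `plus archivelog` also archives the current log before and after the database backup, which the standalone commands below do not do.

```rman
# Piece 1: archive log sweep, up to 32 logs per backupset, skipping logs already backed up
backup device type sbt filesperset 32 archivelog all not backed up;

# Piece 2: datafile backup, one datafile per backupset, split into 64G sections
backup device type sbt cumulative incremental level 1 filesperset 1 section size 64g database;
```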

Now to walk through the archive log backup process:

RMAN will create a backupset of 32 archive logs. This backupset will be sent to the ZDLRA (through the libra.so library) and will be written to physical disk on the ZDLRA. The RMAN catalog on the ZDLRA will be immediately updated with the location of the backupset.

Since no processing is done on the ZDLRA once the backupset is received (beyond what the RMAN client does), the file is written "as is" on the ZDLRA.
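To confirm that a sweep landed and was recorded in the catalog, one option is to list the archive log backupsets from the RMAN client after the backup completes. This is a minimal sketch that assumes you are already connected to the target database and to the Recovery Appliance catalog; the one-day window is an arbitrary choice for illustration.

```rman
# List every backupset that contains archive logs, as recorded in the catalog
list backup of archivelog all;

# Narrow the listing to archive logs from roughly the last day
list backup of archivelog from time 'sysdate-1';
```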

So why do I point this out? As you may know, the ZDLRA compresses the datafile backups it receives, but it does not compress archivelog backupsets sent through RMAN. If you want an archivelog backupset that came to the ZDLRA through an RMAN log sweep to be compressed, you must perform the compression through RMAN before sending the archive logs.

There are a few items to think about before you rush into immediately compressing archive logs.

The first (and probably the most important to your company) is that RMAN compression at any level other than BASIC (which is NOT recommended) requires the Advanced Compression Option (ACO) license. If the databases you support are NOT licensed for ACO, you should stop right here and consider using real-time redo. Real-time redo can use all levels of compression without ACO because the compression is done on the ZDLRA. That will be my next blog post.

#1 - ACO is required for RMAN compression. Use real-time redo to compress on the ZDLRA without the ACO license.

The second thing to think about is which level of compression to use. Below are some example compression ratios and timings that have been achieved, to give you an idea of the differences. Of course everyone's data is different, so your mileage may vary.

BASIC - The elapsed time is 5x longer than it is for NOCOMP. I would absolutely not recommend using BASIC compression.

LOW - The elapsed time was actually less than NOCOMP, most likely due to sending less traffic. The compression ratio was roughly 2:1, giving a great balance of similar execution time and reasonable compression.

MEDIUM - The elapsed time was triple (3x) that of LOW or NOCOMP. The compression ratio was slightly better, but not significantly.

HIGH - The elapsed time was 24x longer than it is for NOCOMP, and the compression ratio was only slightly better. I would absolutely not recommend using HIGH compression.

#2 - LOW compression offers the best balance between elapsed time, and compression ratio.
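RMAN picks the algorithm for `as compressed backupset` from a persistent configuration setting. A minimal sketch of selecting LOW before a log sweep follows; it assumes the database is licensed for ACO, and the `filesperset 32 ... not backed up` clause is simply carried over from the best practice command earlier in this post.

```rman
# Select the LOW algorithm for any backup that uses AS COMPRESSED BACKUPSET (requires ACO)
configure compression algorithm 'LOW';

# Confirm the current setting
show compression algorithm;

# Compressed archive log sweep to the ZDLRA
backup device type sbt as compressed backupset filesperset 32 archivelog all not backed up;
```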

Although compressing archive logs is a good thing, there is a BIG CAVEAT. The ZDLRA has its own compression for datafile backups, and it compresses each individual block, NOT the backupset. Because of this, RMAN compression of datafile backups is not recommended, and if TDE is implemented it will prevent the backups from virtualizing. The two issues are:

- The ZDLRA will uncompress the RMAN backupset and recompress the blocks once virtualized.

- TDE data will not be virtualized since RMAN compression re-encrypts the backupset.

RMAN> CONFIGURE DEVICE TYPE 'SBT_TAPE' BACKUP TYPE TO BACKUPSET;

run

{

backup as compressed backupset filesperset 8 archivelog all not backed up delete input;

backup as backupset cumulative incremental level 1 filesperset 1 section size 128G database;

backup as compressed backupset filesperset 8 archivelog all not backed up delete input;

}

run

{

backup as compressed backupset filesperset 8 archivelog all not backed up delete input;

}